This technical brief on Motion Studies and SMPTE Time was provided by our valued supplier EON Instrumentation.

Motion studies modelled from recorded video are common in scientific and engineering testing. Video imagery captures gas expansions in models, mechanical processes and, more recently, the live motion of people for animation. Because the time difference between frames is needed to calculate the velocities and accelerations of objects of interest, the time at which each frame is captured must be known precisely.
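To illustrate why the capture time of each frame matters, the following is a minimal Python sketch. It assumes each frame already carries a capture time in seconds and a measured position of the object of interest; the function names `velocities` and `accelerations` are hypothetical. Velocities are taken as first differences over the actual interval between frames, and accelerations as differences of those velocities over the interval between their midpoints, so unevenly spaced frames are handled without assuming a nominal frame rate.

```python
from typing import List


def velocities(times_s: List[float], positions_m: List[float]) -> List[float]:
    """Mean velocity over each interval between consecutive frames.

    Uses the actual capture times, so no nominal frame rate is assumed.
    """
    if len(times_s) != len(positions_m):
        raise ValueError("times and positions must have the same length")
    result = []
    for i in range(len(times_s) - 1):
        dt = times_s[i + 1] - times_s[i]
        if dt <= 0:
            raise ValueError("capture times must be strictly increasing")
        result.append((positions_m[i + 1] - positions_m[i]) / dt)
    return result


def accelerations(times_s: List[float], positions_m: List[float]) -> List[float]:
    """Change in mean velocity divided by the time between interval midpoints."""
    v = velocities(times_s, positions_m)
    # Each mean velocity is assigned to the midpoint of its interval.
    mids = [(times_s[i] + times_s[i + 1]) / 2 for i in range(len(times_s) - 1)]
    return [(v[i + 1] - v[i]) / (mids[i + 1] - mids[i]) for i in range(len(v) - 1)]


# An object with x = t**2 (constant acceleration of 2 m/s^2), sampled unevenly.
times = [0.0, 0.1, 0.3]
positions = [t * t for t in times]
print(velocities(times, positions))     # approximately [0.1, 0.4]
print(accelerations(times, positions))  # approximately [2.0]
```

If the three frames had instead been assumed to be evenly spaced, the second velocity would be computed over the wrong interval and the acceleration would be wrong with it.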

SMPTE time code provides a time reference embedded in the video signal. It was originally created to synchronise multiple audio and video sources so that their playback times were identical. Synchronisation allows other scenes or content to be edited and spliced into a single master smoothly and with fidelity.

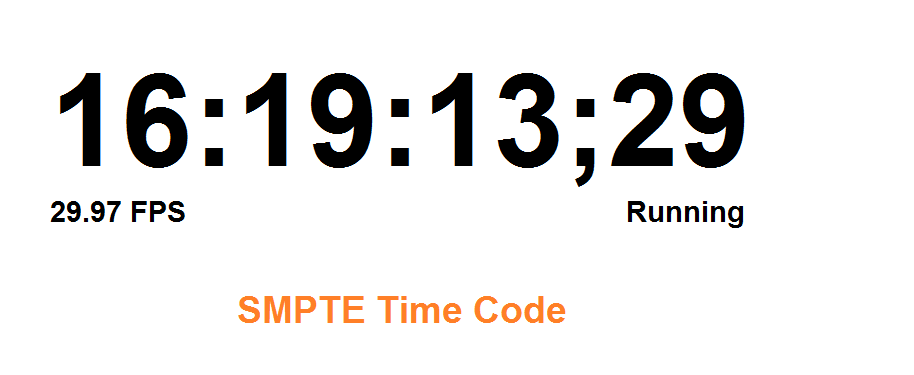

SMPTE Time Code

There are two parts to SMPTE time code: the absolute time (HH:MM:SS) and the frame number (FF). The absolute time may have varying degrees of accuracy, but the frame number itself is incremented with each frame sync. Frame count with respect to time is therefore arbitrary unless the camera is synchronised to a time source. Even then, the timing of image capture varies from camera to camera, with imaging technology and with exposure settings.
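A time code label on its own only identifies a whole second and a frame within it. The minimal Python sketch below, with hypothetical function names, splits an `HH:MM:SS:FF` label into those two parts and forms the time the label implies by assuming a nominal whole number of frames per labelled second. That figure is only an estimate of when the frame was actually captured.

```python
from fractions import Fraction
from typing import Tuple


def parse_timecode(tc: str) -> Tuple[int, int]:
    """Split 'HH:MM:SS:FF' into whole seconds since midnight and the frame number FF."""
    parts = tc.split(":")
    if len(parts) != 4 or not all(p.isdigit() for p in parts):
        raise ValueError(f"expected HH:MM:SS:FF, got {tc!r}")
    hh, mm, ss, ff = (int(p) for p in parts)
    if hh > 23 or mm > 59 or ss > 59:
        raise ValueError(f"invalid time of day in {tc!r}")
    return hh * 3600 + mm * 60 + ss, ff


def label_time(tc: str, frames_per_label_second: int) -> Fraction:
    """Seconds since midnight implied by the label alone.

    Assumes FF counts evenly spaced frames at a nominal integer rate, which is
    exactly the assumption that makes SMPTE time an estimate.
    """
    seconds, ff = parse_timecode(tc)
    if ff >= frames_per_label_second:
        raise ValueError(f"frame {ff} out of range for {frames_per_label_second} frames per label-second")
    return seconds + Fraction(ff, frames_per_label_second)


print(parse_timecode("01:00:00:30"))  # (3600, 30)
print(label_time("01:00:00:30", 60))  # 7201/2
```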

The absolute SMPTE time is set at HH:00:00:00 (on the hour). A SMPTE time code generator will likely jam synchronise (force the fractional seconds and frame count to zero) on the hour. Even if each second is jam sync’d, fractional seconds must still be estimated. Complicating matters further, most video sources deliver frames at rates that are not a whole number of frames per second. Common video sources deliver frames at 60/1.001 or 30/1.001 frames/sec (about 59.94 and 29.97 frames/sec), e.g. the NTSC-derived rates.
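Because 60/1.001 is not an integer, exact rational arithmetic is a convenient way to see what happens between jam syncs. The minimal Python sketch below assumes a plain frame counter that advances one label-second every 60 frames with no other correction (the function names are hypothetical) and reports how far true elapsed time runs ahead of the label.

```python
from fractions import Fraction

# 60/1.001 frames/sec written as an exact ratio (about 59.94 frames/sec).
RATE_60_NTSC = Fraction(60000, 1001)


def frame_period(rate: Fraction) -> Fraction:
    """Exact duration of one frame in seconds."""
    return 1 / rate


def label_lag(frames: int, rate: Fraction, frames_per_label_second: int) -> Fraction:
    """True elapsed time minus the elapsed time shown by a frame-counting label.

    Assumes the counter advances one label-second every
    frames_per_label_second frames and is not re-synchronised in between.
    """
    true_elapsed = frames / rate
    label_elapsed = Fraction(frames, frames_per_label_second)
    return true_elapsed - label_elapsed


print(float(frame_period(RATE_60_NTSC)))      # about 0.016683 s per frame
print(label_lag(60, RATE_60_NTSC, 60))         # 1/1000: 1 ms after one label-second
print(label_lag(60 * 3600, RATE_60_NTSC, 60))  # 18/5: 3.6 s after one label-hour
```

Under these assumptions the label slips a further 1 ms behind real time every label-second, which is why a jam sync on the hour alone leaves the fractional second uncertain.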

Consequently, slightly fewer than 60 frames (about 59.94) fit in one second of a 1080p/60 video stream running at 60/1.001 frames/sec. Each frame period is 1.001/60 ≈ 16.683 ms, so frame 60 starts 1.001 seconds after frame 0 rather than at 1.000000 seconds. The SMPTE studio specifications allow the frame rate and jitter to be ±0.01% (i.e. 29.97 ± 0.003 frames/sec). The time jitter could be ±3 µsec. Therefore, frame 60 could start anywhere from 1.000997 to 1.001003 seconds after frame 0. A jam sync at this point lops off this error; the second is correct, but what exactly is the absolute, or even the relative, fractional second? Was the start of frame 0 at 0.000000 seconds? It may not be known. Therefore, establishing the exact time at which any one frame was captured takes some calculation, involves cumulative errors that may be indeterminate, and ultimately rests on a good guess. In some engineering test circles, SMPTE time has been called “some time”.
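The frame-60 arithmetic can be checked with a short Python sketch. It takes the nominal start of frame n as n divided by the frame rate and widens it by a ± per-frame jitter; for frame 60 at 60/1.001 frames/sec with ±3 µsec of jitter, the window runs from 1.000997 to 1.001003 seconds after frame 0. The optional `rate_tolerance` parameter is an assumption added for illustration: it models the ±0.01% allowance as a constant rate offset, which makes the window grow with n and shows how such errors accumulate. Function and parameter names are hypothetical.

```python
from fractions import Fraction
from typing import Tuple

RATE_60_NTSC = Fraction(60000, 1001)  # 60/1.001 frames/sec


def frame_time_window(
    n: int,
    rate: Fraction,
    jitter_s: Fraction,
    rate_tolerance: Fraction = Fraction(0),
) -> Tuple[Fraction, Fraction, Fraction]:
    """Earliest, nominal and latest start of frame n, in seconds after frame 0.

    jitter_s is a +/- timing jitter applied to frame n.
    rate_tolerance (assumed) treats the rate as off by a constant fraction,
    e.g. Fraction(1, 10000) for +/-0.01%.
    """
    nominal = n / rate
    earliest = n / (rate * (1 + rate_tolerance)) - jitter_s
    latest = n / (rate * (1 - rate_tolerance)) + jitter_s
    return earliest, nominal, latest


jitter = Fraction(3, 1_000_000)  # +/-3 microseconds
print([float(t) for t in frame_time_window(60, RATE_60_NTSC, jitter)])
# [1.000997, 1.001, 1.001003]
earliest, _, latest = frame_time_window(60, RATE_60_NTSC, jitter, Fraction(1, 10000))
print(float(latest - earliest))  # about 0.000206 s once a constant 0.01% rate offset is included
```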

An Alternative to SMPTE Time Capture

An alternative to SMPTE time code is to capture high-resolution absolute time on every frame, using a time standard such as GPS as the reference. Sampling the time at a consistent point in each frame yields accurate time differences between frames as well as accurate knowledge of when each frame was captured. Both measures are needed to model motion and to coordinate measurements from many sources.
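When every frame carries an absolute time stamp from a common reference such as GPS, frames from different sources can be paired directly by time. The minimal Python sketch below assumes each source supplies a sorted list of integer microsecond stamps on the same time scale; `pair_frames` and the `max_gap_us` threshold are hypothetical names, and nearest-neighbour matching is just one simple way to do the pairing.

```python
from bisect import bisect_left
from typing import List, Optional


def nearest_frame(stamps_us: List[int], t_us: int) -> int:
    """Index of the frame in sorted stamps_us whose time stamp is closest to t_us."""
    if not stamps_us:
        raise ValueError("no frames to match against")
    i = bisect_left(stamps_us, t_us)
    if i == 0:
        return 0
    if i == len(stamps_us):
        return len(stamps_us) - 1
    # t_us lies between stamps_us[i - 1] and stamps_us[i]; pick the closer one.
    if t_us - stamps_us[i - 1] <= stamps_us[i] - t_us:
        return i - 1
    return i


def pair_frames(a_us: List[int], b_us: List[int], max_gap_us: int) -> List[Optional[int]]:
    """For each frame of source A, the closest frame of source B, or None if too far apart."""
    pairs: List[Optional[int]] = []
    for t in a_us:
        j = nearest_frame(b_us, t)
        pairs.append(j if abs(b_us[j] - t) <= max_gap_us else None)
    return pairs


camera_a = [0, 16683, 33367]
camera_b = [5000, 21683, 100000]
print(pair_frames(camera_a, camera_b, max_gap_us=8000))  # [0, 1, None]
```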

EON Instrumentation HD-SDI Inserters & Video Recorders

All EON Instrumentation HD-SDI inserters and video recorders stamp frames at the instant of vertical sync. The time is recorded to a resolution of 1 µsec and an accuracy of 2±1 µsec per frame. This highly accurate time may be embedded as KLV metadata in the SMPTE 291M ancillary data space, formatted in accordance with MISB 0605.3, and may concurrently be placed in an overlay as human-readable text. Both the delta times and the actual time of each frame are correct without any correction for the actual frame rate. When studying motion, time stamping this way provides frame-to-frame time accuracy of ±0.0008%. Accurate and certain.
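KLV (key-length-value) metadata, as defined in SMPTE 336M, wraps each value in a 16-byte universal key and a BER-encoded length. The minimal Python sketch below shows that wrapping for a single time stamp. It assumes the stamp is carried as a 64-bit unsigned, big-endian count of microseconds; the all-zero key is a hypothetical placeholder, not the label that MISB 0605.3 specifies, and the sketch does not build the SMPTE 291M ancillary packet that would carry the bytes.

```python
import struct


def ber_length(n: int) -> bytes:
    """BER length field: one byte below 128, otherwise 0x80 | byte count followed by the length."""
    if n < 0:
        raise ValueError("length must be non-negative")
    if n < 0x80:
        return bytes([n])
    body = n.to_bytes((n.bit_length() + 7) // 8, "big")
    return bytes([0x80 | len(body)]) + body


def klv_encode(key: bytes, value: bytes) -> bytes:
    """Concatenate a 16-byte universal key, the BER length and the value."""
    if len(key) != 16:
        raise ValueError("this sketch only accepts 16-byte universal keys")
    return key + ber_length(len(value)) + value


# Hypothetical placeholder key: NOT the key defined by MISB 0605.3.
PLACEHOLDER_KEY = bytes(16)


def timestamp_klv(us_since_epoch: int) -> bytes:
    """Assumed layout: 64-bit unsigned big-endian microsecond count."""
    return klv_encode(PLACEHOLDER_KEY, struct.pack(">Q", us_since_epoch))


packet = timestamp_klv(1_000_000)
print(len(packet))            # 25 bytes: 16 key + 1 length + 8 value
print(ber_length(300).hex())  # 82012c
```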

Take a look at this HD-SDI inserter recorder. The recorder captures uncompressed video clips at up to 1080p/60 and timestamps each frame with 1 ±2 µsec accuracy, synchronised to an internal GPS receiver. Each clip provides a clear image, just as the camera delivered it, with accurate time from frame to frame, allowing motion behaviours to be studied, modelled and predicted.

For further information, contact us.